Indeed likes being fast. Similar to published studies (Speed Matters and Velocity and the Bottom Line), our internal numbers validate the benefits of speed. It makes sense: a snappy site allows job seekers to achieve their goals with less frustration and wasted time.

Application processing time, however, is only part of the story, and in many cases it is not even the most significant delay. The network time – getting the request from the browser and then the data back again – is often the biggest time sink.

How do you minimize network time?

Engineers use all sorts of tricks and libraries to compress content and load things asynchronously. At some point, however, the laws of physics sneak in, and you just need to get your data center and your users communicating faster.

Sometimes, your product runs in a single data center, and the physical proximity of that data center is valuable. In this case, moving is not an option. Perhaps you can do some caching or use a CDN for static resources. For those who are less tied to a physical location, or, like Indeed, run their site out of multiple data centers, a different data center location may be the key. But how do you choose where to go? The straightforward methods are:

Word of Mouth. The price is good and you’ve talked to other customers from the data center. They seem satisfied. The list of Internet carriers the data center provides seems comprehensive. It’s probably a good fit for your users … if you’re lucky.

Location. You have a lot of American users on the East Coast. Getting a data center close to them, say in the New York area, should help make things faster for the East Coast.

Prepare to be disappointed.

These aren’t bad reasons to pick a data center, but the Internet isn’t based on geography – it’s based on peering points, politics, and price. If it’s cheaper for your customer’s ISP to route New York through New Jersey because they have dedicated fiber to a facility they own, they’re probably going to do that, regardless of how physically close your data center is to the person accessing your site. The Internet’s “series of tubes” don’t always connect where you’d think.

What we did

In October of 2012, Indeed faced a similar quandary. We had a few data centers spread out across the U.S., but the West Coast facility was almost full, and the provider warned that they were going to have a hard time with our predicted growth. The Operations team was eager to look at alternate data centers, but we also didn’t want to make things slower for the West Coast users. So we set up test servers in a few data centers. We pinged the test servers from as many places as we could, comparing the results to the ping times of the original data center. This wasn’t a terrible approach, but it also didn’t mimic the job seeker’s experience.

Meanwhile, other departments were thinking about the problem too. A casual hallway conversation with an engineering manager snowballed into the method we use today. It was important to use real user requests to test possible new locations. After all, what better measure could there be of how users perceive a data center than those same users?

After a few rounds of discussion, and some Dev and Ops time, we came up with the Fruits Test, named for the fruit-based hostnames of our test servers. Using this technique, we estimated that the proposed new data center would shave an average of 30 milliseconds off the response time for most of our West Coast job seekers. We validated this number once we migrated our entire footprint to the new facilities.

How it works

First, we assess a potential data center for eligibility. It doesn’t make sense to run a test against an environment that’s unsuitable because of space or cost. After clearing that hurdle, we set up a lightweight Linux system with a web server. This web server has a single virtual host named after a fruit, such as lemon.indeed.com. We set up the virtual host to serve ten static JavaScript files, named 0.js, 1.js, etc., up to 9.js.

Once the server is ready, we set up a test matrix in Proctor, our open-sourced A/B testing framework. We assign a fruit and a percentage to each test bucket. Then, each request to the site is randomly assigned to one of the test buckets based on the percentages. Each fruit corresponds to a data center being tested (whether new or existing). We publish the test matrix to Production, and then the fun begins!
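
The percentage-based assignment can be pictured as a weighted random draw. The sketch below is not Proctor's API: the bucket list, the `assignBucket` name, and the percentages are made up for illustration, and the random source is passed in so the behavior is easy to check.

```javascript
// Hypothetical test matrix: each bucket pairs a fruit with a share of traffic.
// The percentages are illustrative, not Indeed's real allocation.
const buckets = [
  { fruit: "lemon", percent: 5 },
  { fruit: "quince", percent: 5 },
  { fruit: null, percent: 90 }, // inactive: no fruit test for this request
];

// Pick a bucket by walking the cumulative percentages.
// `random` returns a number in [0, 1); it defaults to Math.random.
function assignBucket(matrix, random = Math.random) {
  const total = matrix.reduce((sum, b) => sum + b.percent, 0);
  const roll = random() * total;
  let cumulative = 0;
  for (const bucket of matrix) {
    cumulative += bucket.percent;
    if (roll < cumulative) {
      return bucket;
    }
  }
  return matrix[matrix.length - 1]; // guard against floating-point edge cases
}
```

Calling `assignBucket(buckets)` once per request would, under these assumed percentages, send roughly one request in twenty to each fruit and leave the rest untouched.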

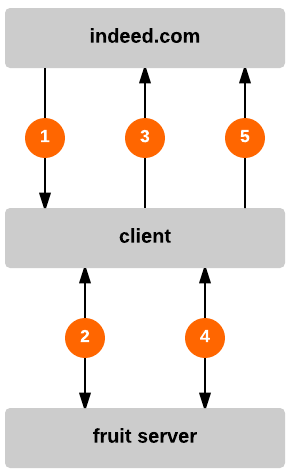

Figure 1: Fruits test requests, responses, and logging

Requests in the test bucket receive JavaScript instructing their browser to start a timer and load the 0.js file from their selected fruit site. This file includes a blank comment and an instruction to call the dcDnsCallback function. On lemon.indeed.com, it passes in "l" to indicate the test fruit:

/*

*/

dcDnsCallback("l");

dcDnsCallback then stops the previous timer, and sends a request to indeed.com, which triggers a log event with the recorded request latency.

The dcDnsCallback function serves two purposes. Since the user’s system may not have the fruit hostname’s IP address in its DNS cache, we can get an idea of how long it takes to do a DNS lookup and a single request round trip. Then, subsequent requests to that fruit host within this session won’t have DNS lookup time as a significant variable, making those timing results more precise.
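
A rough sketch of the timing flow described so far, with the assumptions labeled: `createFruitTimer`, `loadScript`, `report`, and `now` are hypothetical names, not Indeed's code, and the `https://` scheme is a guess. The loader and reporter are passed in so the sketch runs outside a browser; in a page, the loader would add a script tag pointing at the fruit host and the reporter would send the measurement back to the main site.

```javascript
// Hypothetical client-side timer. Dependencies are injected:
//   loadScript(url): starts fetching a script from the fruit host
//   report(sample):  sends the measurement back for logging
//   now():           returns the current time in milliseconds
function createFruitTimer({ loadScript, report, now = Date.now }) {
  let startedAt = null;
  let currentFile = null;

  // Start the timer, then request the given file from the fruit host.
  function start(host, file) {
    currentFile = file;
    startedAt = now();
    loadScript("https://" + host + "/" + file); // scheme is an assumption
  }

  // Called by the loaded file, e.g. dcDnsCallback("l"). Stops the timer
  // and reports which fruit and file were measured and how long it took.
  function callback(fruitCode) {
    if (startedAt === null) {
      return; // no measurement in progress; ignore stray callbacks
    }
    const elapsedMs = now() - startedAt;
    startedAt = null;
    report({ fruit: fruitCode, file: currentFile, elapsedMs });
  }

  return { start, callback };
}
```

In this arrangement the same `callback` could be exposed under both global names, since the DNS warm-up and the later ping differ only in which file was requested.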

After the dcDnsCallback invocation, the test selects one of the 9 static JavaScript files at random and repeats the same process: start timer, get the file, run function in the file. These files look a little bit like:

/*

3firaei1udgihufif5ly7zbsqyz59ghisb13u1j26tkffr7h67ppywg12lfkg7ortt5t3xoq5

*/

dcPingCallback("l");

These 9 files (1.js through 9.js) are basically the same as 0.js, but call a dcPingCallback function instead, and contain a comment whose length makes the overall response bulk up to a predefined size. The smallest, 1.js, is just 26 bytes, and 9.js comes in at a hefty 50 kilobytes. Having different sized files helps us suss out areas where latency may be low, but available bandwidth is limited enough that getting larger files takes a disproportionately long time. It can also identify areas where bandwidth is plentiful enough that the initial TCP connection setup is the most time-consuming aspect of the transaction.
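
To make the padding idea concrete, here is a sketch of how such a file could be generated for a target size. The `buildPingFile` helper, the filler character, and the exact line layout are assumptions; the post does not say how the files were produced.

```javascript
// Build the body of a ping file whose total size is `targetBytes`.
// The comment is padded with filler so the response reaches that size.
// Only ASCII is used, so string length equals byte length.
function buildPingFile(fruitCode, targetBytes) {
  const open = "/*\n";
  const close = "*/\n";
  const call = 'dcPingCallback("' + fruitCode + '");';
  const empty = open + close + call; // smallest possible file in this layout
  const extra = targetBytes - empty.length;
  if (extra < 0) {
    throw new RangeError("targetBytes is smaller than the minimum file size");
  }
  if (extra === 0) {
    return empty;
  }
  // Reserve one byte for the newline that ends the padding line.
  const padding = "x".repeat(extra - 1) + "\n";
  return open + padding + close + call;
}
```

With a one-letter fruit code, the empty-comment form in this layout is 26 bytes, so `buildPingFile("l", 26)` returns a file with no padding at all, and larger targets only grow the comment.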

Once the dcPingCallback function is executed, the timer is stopped and the information about which fruit, which JavaScript file, and how long the operation took is sent to Indeed to be logged. These requests are all placed at the end of the browser’s page rendering and executed asynchronously to minimize the impact of the test on the user’s experience.

On indeed.com, the logging endpoint receives this data and records it, along with the source IP address and the site the user is on. We then write the information to a specially formatted logstore that Indeed calls the LogRepo – mysterious name, I know.

After collecting the LogRepo logs, we build indexes from them using Imhotep, which allows for easy querying and graphing. Depending on the nature of the test, we usually let the fruits test run for a couple of weeks, collecting hundreds of thousands or even millions of samples from real job seekers that we can use to make a more informed decision. When the test has run its course, we just turn off the Proctor test and shut down the fruit test server. That’s it! No additional infrastructure changes needed.
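
The kind of question the collected samples answer can be sketched with a small aggregation. This is not Imhotep or the LogRepo format; the record shape (`fruit`, `file`, `elapsedMs`) and the `medianByFruit` helper are assumptions for illustration only.

```javascript
// Median of a non-empty numeric array.
function median(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1
    ? sorted[mid]
    : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Group samples by fruit and return the median latency for each fruit.
function medianByFruit(samples) {
  const groups = new Map();
  for (const { fruit, elapsedMs } of samples) {
    if (!groups.has(fruit)) {
      groups.set(fruit, []);
    }
    groups.get(fruit).push(elapsedMs);
  }
  const result = {};
  for (const [fruit, latencies] of groups) {
    result[fruit] = median(latencies);
  }
  return result;
}
```

A median is used here because a handful of very slow requests can distort an average; grouping by file as well as fruit would separate latency effects from bandwidth effects.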

One of the nice things about this approach is that it is flexible for other types of tests. Sure, we mainly use it for testing new data center locations, but when you boil it down to its essentials (fruit jam!), all the test does is download a set amount of data from a random sampling of users and tell you how long it took. Interpreting the results is up to the test designer.

Rather than testing data centers, you could test two different caching technologies, or the performance difference between different versions of web or app servers, or the geographic distribution of an Anycast/BGP IP (we’ve done that last one before). As long as the sample is large and varied enough to represent your traffic, it makes for a valid comparison, and one drawn from the best people to ask: your users.

That’s nice, but why “Fruits Test”?

When we were discussing unique names to represent potential and current data center locations, we wanted names that were:

- easily identifiable to Operations

- a little bit obscure to users, but not too mysterious

- not meaningful for the business

As a placeholder while designing things, we used fruits since it was fairly easy to come up with different fruits for letters of the alphabet. Over the course of the design the names became endearing and they stuck. Now I relish opening up tickets to enable tests for jujube, quince (my favorite), and elderberry!

Now what?

Now that we have a pile of data, we graph the heck out of it! But more about that in Part 2 of the Fruits Test series.